Text-to-Shape Display: Dynamic Shape Changes via Natural Language Commands

Abstract

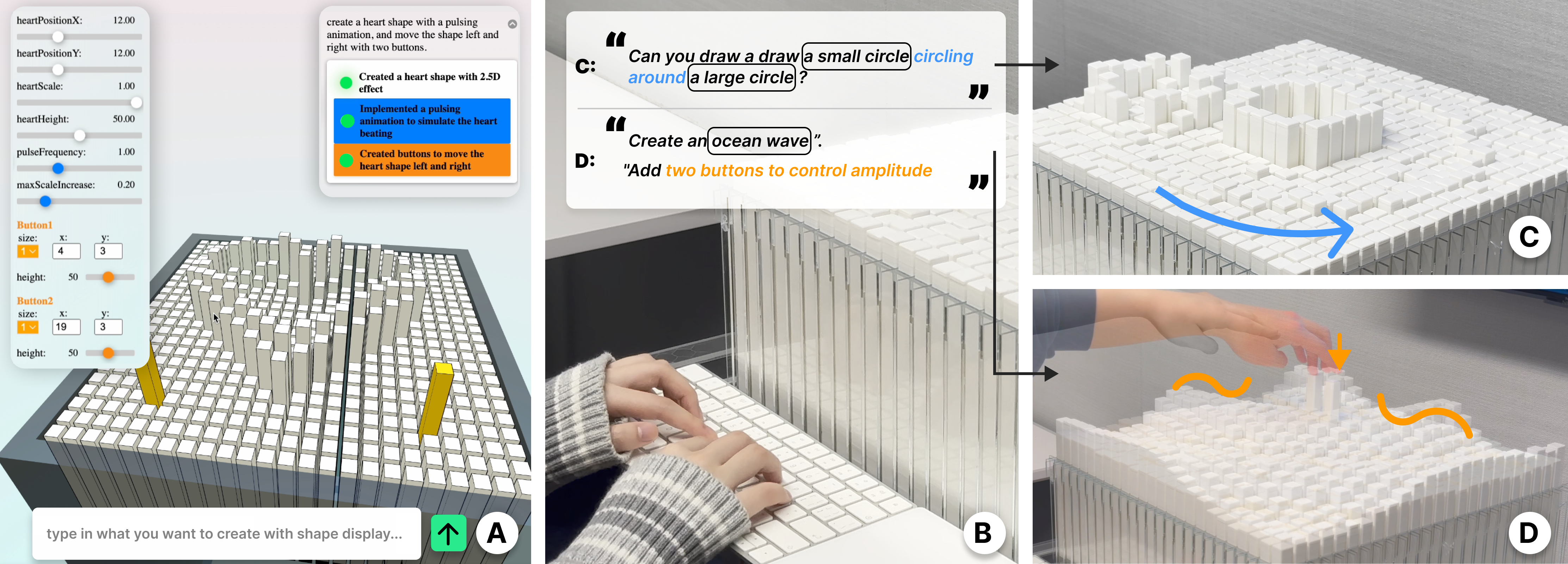

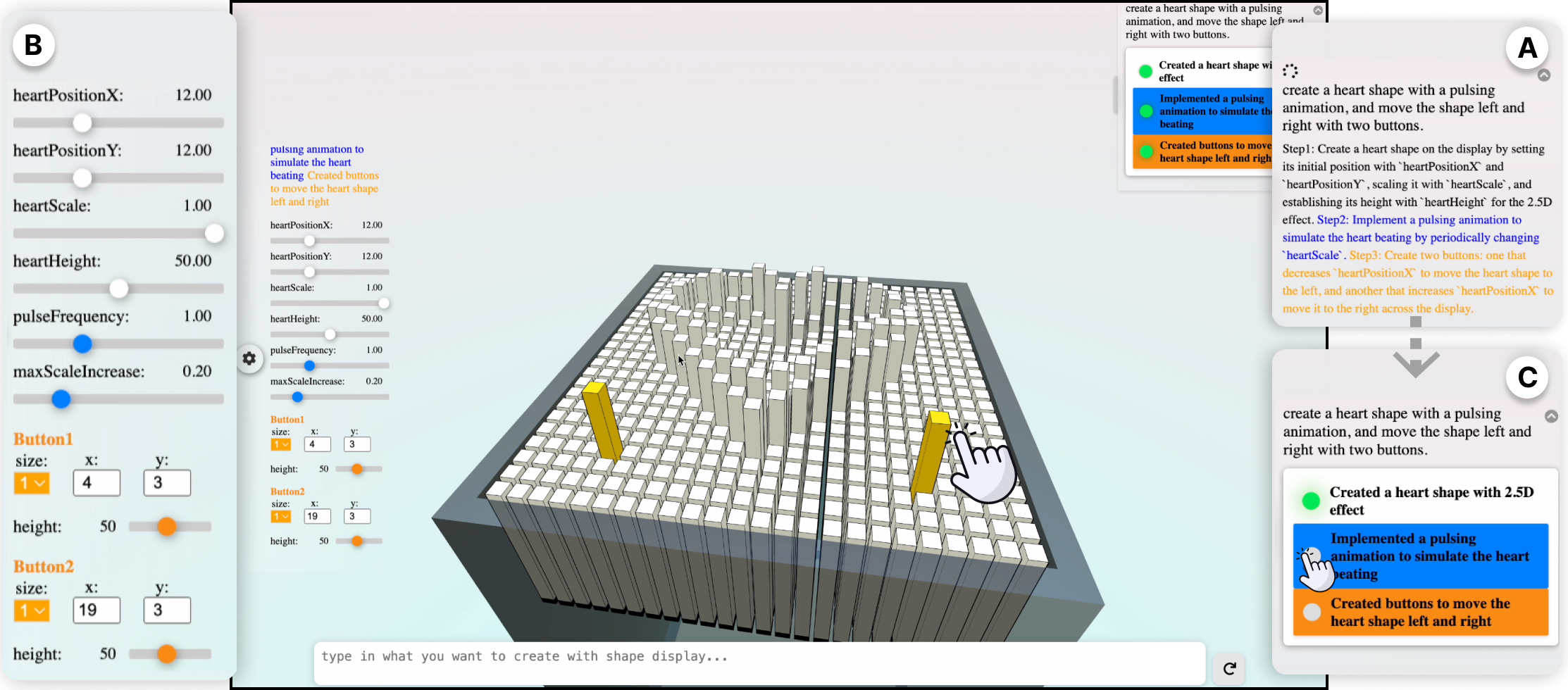

This paper introduces text-to-shape-display, a novel approach to generating dynamic shape changes in pin-based shape displays through natural language commands. By leveraging large language models (LLMs) and AI-chaining, our approach allows users to author shape-changing behaviors on demand through text prompts without programming. Key generative elements (primitive, animation, and interaction) are identified, and design requirements for enhancing user interaction are explored based on formative and iterative design processes.

We present SHAPE-IT, an LLM-based authoring tool for a 24 x 24 shape display, which translates textual commands into executable code. The tool allows rapid exploration through a web-based control interface. Evaluations, including performance metrics and a user study with 10 participants, reveal SHAPE-IT’s promise in enabling rapid ideation of diverse shape-changing behaviors. However, the findings also expose accuracy challenges and limitations, motivating further refinement of the framework to better suit shape-changing systems.

Contributions

- Introduced text-to-shape-display, integrating LLMs and AI-chaining for authoring shape-changing behaviors in pin-based shape displays through natural language commands.

- Defined foundational generative elements (primitive, animation, and interaction) to support system design and user interaction.

- Developed SHAPE-IT, a web-based authoring tool that translates natural language inputs into executable code for a 24 x 24 pin-based shape display.

- Demonstrated the potential for rapid, on-demand ideation of dynamic shapes and animations without requiring user programming.
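To make the three generative elements concrete, here is a minimal sketch of how a primitive, an animation, and an interaction might each be expressed as an operation on a 24 x 24 grid of pin heights. The function names, the normalized height range of 0 to 1, and the specific behaviors (a cone, a pulse, a press) are illustrative assumptions, not the representation used by SHAPE-IT.

```python
import math

GRID = 24  # 24 x 24 pins, matching the display size named in the paper


def primitive_cone(cx, cy, radius, peak=1.0):
    """Primitive (hypothetical): a static cone-shaped bump.

    Heights are normalized to [0, 1]; frame[y][x] is the pin at column x, row y.
    """
    return [
        [max(0.0, peak * (1 - math.hypot(x - cx, y - cy) / radius))
         for x in range(GRID)]
        for y in range(GRID)
    ]


def animate_pulse(frame, t, period=2.0):
    """Animation (hypothetical): scale a frame by a factor oscillating in [0, 1]."""
    scale = 0.5 * (1 + math.sin(2 * math.pi * t / period))
    return [[h * scale for h in row] for row in frame]


def interact_press(frame, px, py):
    """Interaction (hypothetical): pressing a pin lowers it to 0."""
    out = [row[:] for row in frame]  # copy so the input frame is left unchanged
    out[py][px] = 0.0
    return out
```

In this framing a text command would map to one or more of these layers: the primitive supplies the base shape, the animation varies it over time, and the interaction modifies it in response to user input.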

Implementation

- Designed a software system integrating natural language processing with shape-changing hardware.

- Built SHAPE-IT to translate natural language commands into control scripts that produce dynamic shape-changing behaviors.

- Utilized a web-based interface for real-time interaction and control of the 24 x 24 pin-based shape display.
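The translation step described above can be sketched as a small two-stage chain: one model call classifies the command, and a second call generates a script that is then evaluated over the pin grid. This is a sketch under stated assumptions, not SHAPE-IT's pipeline: `stub_llm` is a canned stand-in for a real LLM call, and the prompt wording, the `height(x, y, t)` script contract, and the clamping to [0, 1] are all hypothetical.

```python
from typing import Callable, List

GRID = 24  # 24 x 24 pins, matching the display size named in the paper


def stub_llm(prompt: str) -> str:
    """Hypothetical stand-in for an LLM call; returns canned text so the sketch runs offline."""
    if prompt.startswith("CLASSIFY"):
        return "primitive"
    # Canned "generated script": by assumption it must define height(x, y, t).
    return (
        "def height(x, y, t):\n"
        "    return 1.0 if 8 <= x < 16 and 8 <= y < 16 else 0.0\n"
    )


def chain(command: str, llm: Callable[[str], str] = stub_llm) -> str:
    """Two chained calls: classify the command, then generate a script for it."""
    kind = llm(f"CLASSIFY the command as primitive, animation, or interaction: {command}")
    return llm(f"GENERATE a Python function height(x, y, t) for a {kind}: {command}")


def render(script: str, t: float = 0.0) -> List[List[float]]:
    """Run the generated script and sample it into a clamped 24 x 24 frame."""
    scope: dict = {}
    # exec on model output is unsafe outside a sandbox; used here only for illustration.
    exec(script, scope)
    fn = scope["height"]
    return [
        [min(1.0, max(0.0, float(fn(x, y, t)))) for x in range(GRID)]
        for y in range(GRID)
    ]


frame = render(chain("raise a square in the middle"))
```

Splitting the work into separate calls keeps each prompt narrow, and clamping the sampled heights guards the hardware range against out-of-bounds values in generated code.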

Evaluation

- Performance Evaluation: Assessed SHAPE-IT’s ability to generate accurate and dynamic shape-changing behaviors from varying text complexities.

- User Evaluation: Conducted a study with 10 participants, exploring SHAPE-IT’s usability, versatility, and the effectiveness of natural language-based interaction.

- Results showed the system’s promise in enabling rapid ideation but identified accuracy-related challenges requiring future improvements.