Language-Guided Multimodal Texture Authoring

Accepted to the IEEE Haptics Symposium and honored with the Distinguished Technical Long Paper Award.

Abstract

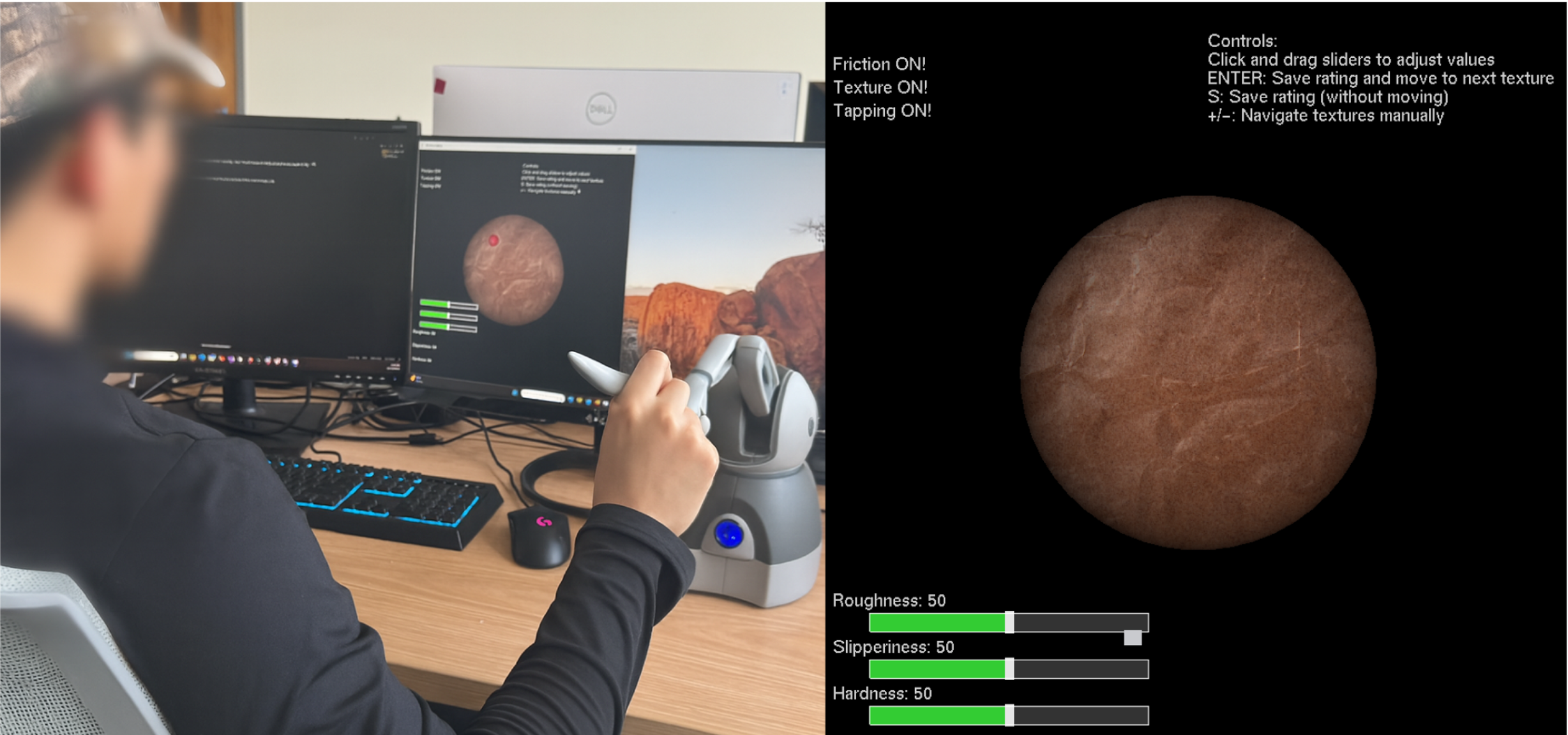

Authoring realistic haptic textures often requires low-level parameter tuning and repeated trial and error, which slows iteration and limits creative exploration. This work introduces a language-driven authoring system that turns natural-language prompts into multimodal textures: coordinated haptic signals (sliding vibrations generated by force- and speed-conditioned autoregressive models, plus tapping transients) and a visual preview generated from the same prompt by a diffusion model. A shared, language-aligned latent space links the modalities, so a single prompt yields semantically consistent haptic and visual output; designers can specify goals such as “gritty but cushioned” or “smooth hard metal” and immediately see and feel the result through a 3D haptic device. An anchor-referenced, attribute-wise evaluation of roughness, slipperiness, and hardness shows perceptually meaningful structure in the latent space, with trends reflecting asperity, compliance, and surface-film effects. A human-subject study further indicates a coherent cross-modal experience and low effort for prompt-based iteration. Overall, the results establish language as a practical control modality for texture authoring, enabling prompt-first, designer-oriented workflows that replace manual parameter tuning with interpretable, text-guided refinement.
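The abstract names force- and speed-conditioned autoregressive (AR) models as the source of sliding vibrations. The sketch below illustrates the general technique under stated assumptions, not the authors' implementation: a hypothetical table `AR_MODELS` maps (normal force, scan speed) grid points to AR coefficients and a noise level, and a hypothetical `ARVibrationRenderer` picks the nearest model for each frame and drives it with white Gaussian noise, carrying filter history across frames. All numbers, names, and the nearest-neighbour lookup are illustrative assumptions.

```python
import math
import random

# Hypothetical AR models for ONE texture, keyed by (normal force [N], scan speed [mm/s]).
# Each entry is (AR coefficients a_1..a_p, std of the white-noise excitation).
# All numbers are illustrative placeholders, not values from the paper.
AR_MODELS = {
    (0.5, 50.0): ([0.5, -0.3], 0.02),
    (0.5, 200.0): ([1.2, -0.5], 0.05),
    (2.0, 50.0): ([0.8, -0.4], 0.04),
    (2.0, 200.0): ([1.2, -0.5], 0.10),
}


class ARVibrationRenderer:
    """Streams x[n] = sum_k a_k * x[n-k] + e[n], e[n] ~ N(0, sigma^2), switching to the
    AR model nearest the current (force, speed) at each frame (hypothetical sketch)."""

    def __init__(self, models, force_scale=2.0, speed_scale=200.0, seed=0):
        self.models = models
        self.force_scale = force_scale  # normalizes force before distance comparison
        self.speed_scale = speed_scale  # normalizes speed before distance comparison
        self.rng = random.Random(seed)
        order = max(len(coeffs) for coeffs, _ in models.values())
        self.history = [0.0] * order  # past outputs, most recent first; kept across frames

    def nearest_key(self, force, speed):
        # Nearest-neighbour lookup on the normalized (force, speed) grid.
        return min(
            self.models,
            key=lambda k: math.hypot((k[0] - force) / self.force_scale,
                                     (k[1] - speed) / self.speed_scale),
        )

    def render(self, force, speed, n_samples):
        coeffs, noise_std = self.models[self.nearest_key(force, speed)]
        out = []
        for _ in range(n_samples):
            # zip pairs a_1 with x[n-1], a_2 with x[n-2], ...
            x = sum(a * h for a, h in zip(coeffs, self.history))
            x += self.rng.gauss(0.0, noise_std)
            out.append(x)
            self.history = [x] + self.history[:-1]
        return out


renderer = ARVibrationRenderer(AR_MODELS, seed=42)
frame = renderer.render(force=1.8, speed=180.0, n_samples=64)
print(renderer.nearest_key(1.8, 180.0), len(frame))
```

Nearest-neighbour switching is the simplest choice and can produce audible jumps at grid boundaries; smoother schemes interpolate between neighbouring models in a representation that keeps the resulting filter stable.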

Contributions

- Language-driven multimodal texture authoring that unifies haptic signals and visual previews from a single prompt.

- A shared, language-aligned latent space that preserves semantic consistency across haptic and visual channels.

- User studies and attribute-wise evaluations demonstrating perceptually meaningful control over roughness, hardness, and slipperiness.
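One way to read "anchor-referenced, attribute-wise" structure in a shared latent space is to define each attribute as the axis between two anchor embeddings and score other latents by their projection onto it. The sketch below assumes that reading; it is not the paper's evaluation protocol. The function `attribute_score`, the 3-D toy latents, and the anchor vectors `SMOOTH` and `ROUGH` are hypothetical stand-ins for embeddings that a real system would obtain from its text encoder.

```python
def attribute_score(z, low_anchor, high_anchor):
    """Project latent z onto the axis from low_anchor (score 0) to high_anchor (score 1).
    Scores outside [0, 1] mean z lies beyond one of the anchors along that axis."""
    axis = [h - l for h, l in zip(high_anchor, low_anchor)]
    denom = sum(a * a for a in axis)
    if denom == 0.0:
        raise ValueError("anchors must differ")
    return sum((zi - li) * ai for zi, li, ai in zip(z, low_anchor, axis)) / denom


# Hypothetical 3-D latents; a real system would encode anchor prompts such as
# "smooth" and "rough" with its shared text encoder instead.
SMOOTH = [1.0, 0.0, 0.0]
ROUGH = [0.0, 1.0, 0.0]
textures = {
    "polished metal": [0.9, 0.1, 0.3],
    "fine sandpaper": [0.5, 0.5, 0.2],
    "coarse gravel": [0.1, 0.9, 0.4],
}

scores = {name: attribute_score(z, SMOOTH, ROUGH) for name, z in textures.items()}
ranking = sorted(scores, key=scores.get)  # least to most rough
print(ranking)
```

The same function applies per attribute (for example hardness with "soft"/"hard" anchors); a linear projection assumes the attribute varies roughly monotonically along the anchor axis, which is what an attribute-wise evaluation would need to check against human ratings.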